Websites first learned to talk to browsers, then to search engines. Cloudflare now argues that they need to learn to talk to AI agents, and offers a tool to help. The initiative is ambitious, but it raises more questions than it answers.

Highlights:

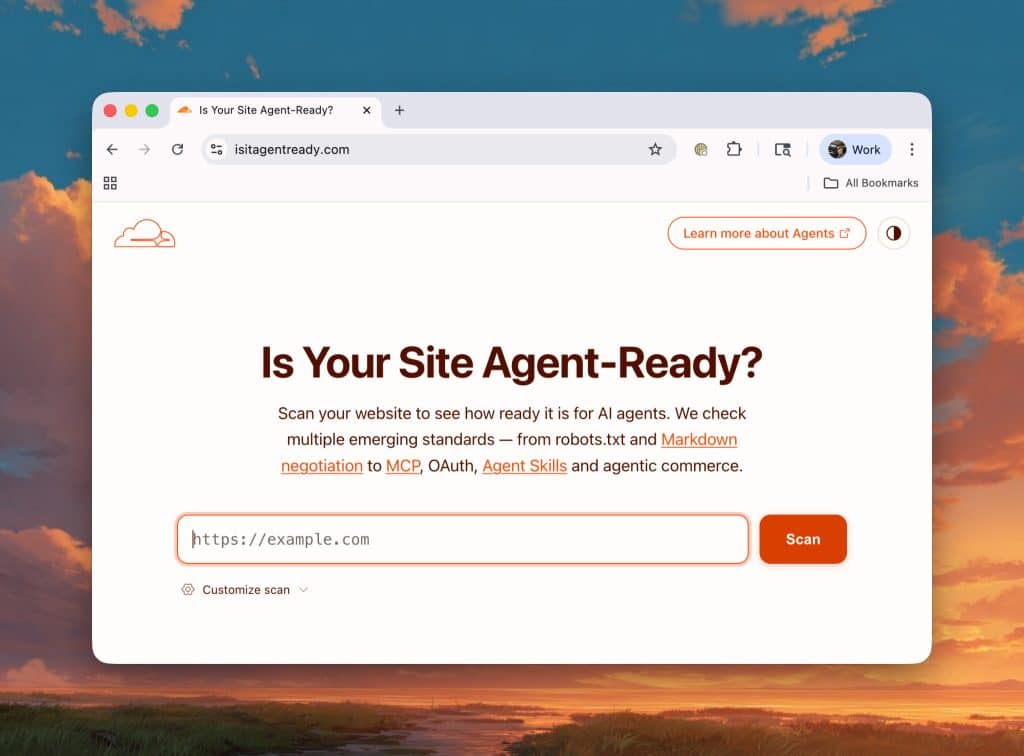

- Cloudflare launched a free tool, isitagentready.com, that evaluates how compatible a website is with AI agents across four dimensions: discoverability, content, access control, and capabilities.

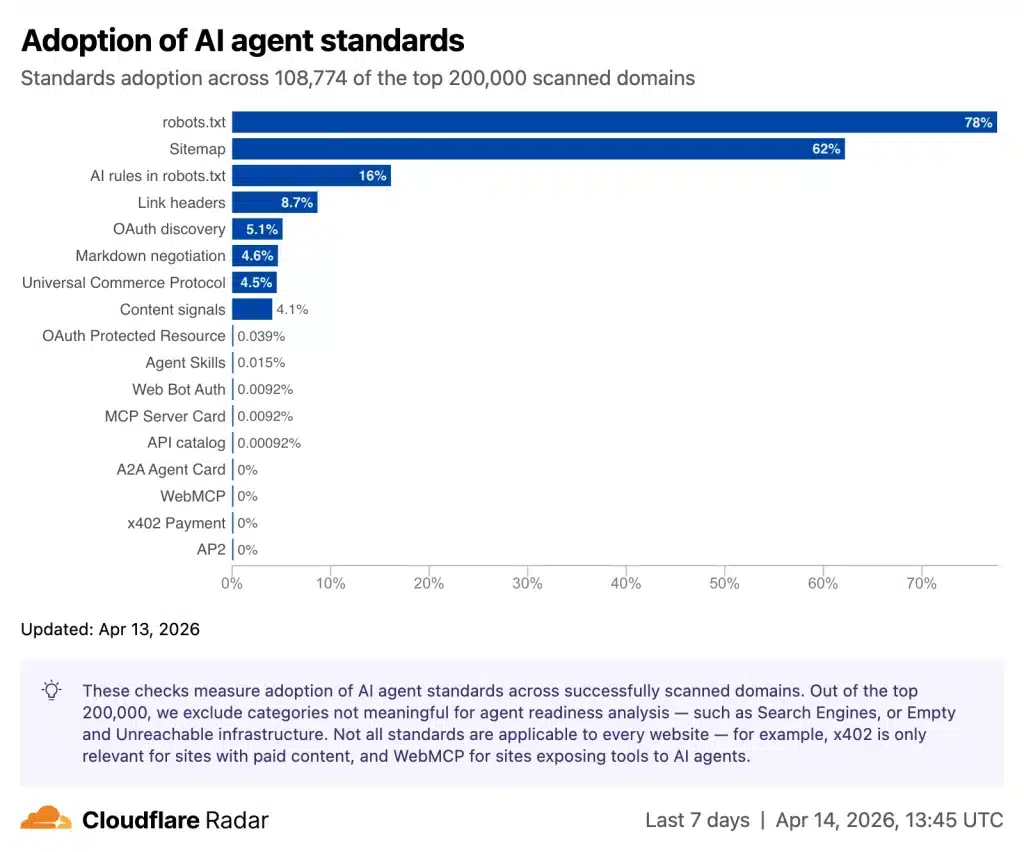

- The web is not ready yet: Only 4% of the 200,000 analyzed websites report their preferences for AI usage, and fewer than 15 sites have adopted the latest standards such as MCP Server Cards or API Catalogs.

- The initiative relies on a still-evolving ecosystem of standards, which exposes early adopters to the risk of fragmentation or rapid obsolescence.

- Cloudflare is in a position both to set the score and to sell the means of improving it, which raises conflict-of-interest questions.

A Scoring Tool for a Real Problem

Cloudflare's starting point is solid. When an AI agent accesses a website to read documentation, purchase products, or interact with an API, it encounters an infrastructure designed for humans: heavy HTML, forms, sessions, captchas. The result is agents that are slow, costly in tokens, and often wrong.

Cloudflare measured the scale of the problem by scanning the 200,000 most visited domains, filtering out redirects, ad servers, and tunnel services to focus on sites that agents could reasonably interact with.

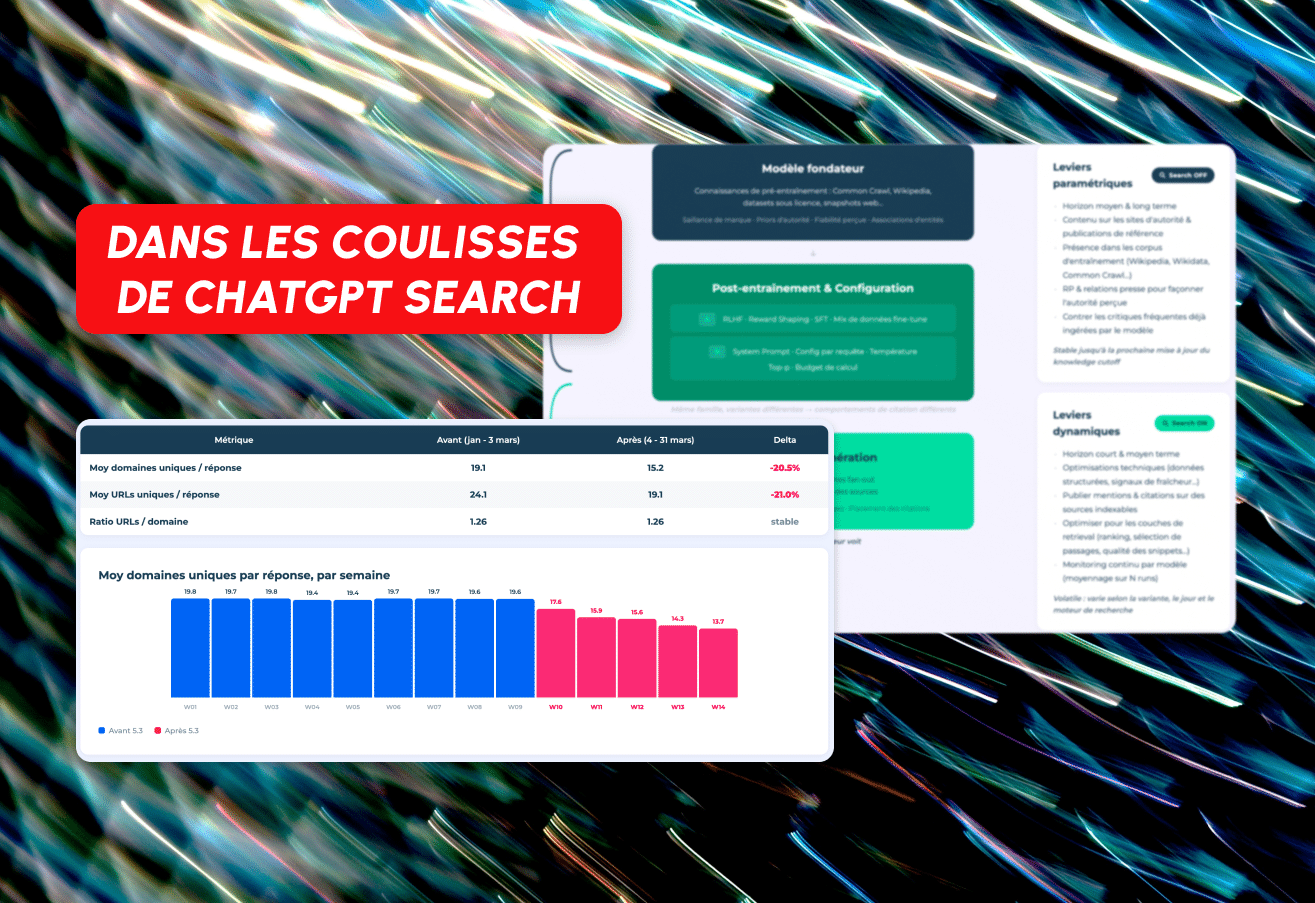

The result is striking: 78% of sites have a robots.txt file, but almost all of these are written for traditional search engine crawlers, not for agents. Only 3.9% serve content in Markdown when asked for it. And new standards like MCP Server Cards appear on fewer than 15 sites across the entire dataset.

The solution promoted by Cloudflare through isitagentready.com offers a score structured around four axes.

- Discoverability checks the presence and quality of robots.txt, sitemap.xml, and Link headers.

- Content evaluates whether the site serves a clean Markdown version when an agent requests it.

- Access Control looks at whether the site expresses clear preferences regarding what AI can do with its content.

- Finally, Capabilities tests for more advanced standards like MCP Server Cards, API Catalogs, or OAuth discovery, which allow agents to authenticate properly.

Emerging Standards and Almost Nonexistent Adoption

Here, Cloudflare's enthusiasm should be tempered. Many of the standards it highlights are still IETF drafts or informal proposals with no guarantee of broad acceptance. API Catalog has been published as RFC 9727, but MCP Server Cards and Web Bot Auth had not reached RFC status at the time of publication.

This situation is not unique to Cloudflare: it is the reality of a web in transition. But it calls for a degree of candor that Cloudflare's blog post downplays. A standard adopted today may be reworked or abandoned within eighteen months, forcing teams to redo the integration. Major players have the resources to keep up with these changes; smaller teams and independent developers may not.

The example of llms.txt is telling. Proposed in September 2024, this standardized file for introducing a site to an LLM is not part of Cloudflare's default score; it is only an optional check. The reason? The standard is still under discussion. This is a cautious decision, but it also shows that Cloudflare does not yet know which bets it should place.

Markdown Content Negotiation: A Real, Measured Gain

One of the most concrete aspects of the initiative, and likely the most immediately useful, is having the server respond in Markdown when an agent sends an Accept: text/markdown header. Cloudflare claims to have measured a reduction of up to 80% in the number of tokens required to read a page.

The context of this figure matters. The HTML of a technical documentation page is often bloated: navigation, menus, scripts, nested tags... all of it pure noise for an LLM. A well-structured Markdown file is the content stripped down to its essentials. The direct result is cheaper API calls for agents, lower latency, and a better chance of fitting the full context without truncation.
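To make the Accept-header mechanism concrete, here is a minimal sketch of the server-side decision in Python. It is not Cloudflare's implementation: the function names `parse_accept` and `choose_representation` are hypothetical, and the policy (serve Markdown only when the client names it explicitly, HTML otherwise) is an assumption chosen so that ordinary browsers are never affected.

```python
def parse_accept(header: str) -> list[tuple[str, float]]:
    """Parse an Accept header into (media_type, q) pairs, highest q first."""
    entries = []
    for part in header.split(","):
        fields = [f.strip() for f in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0  # a missing q parameter means full preference
        for param in fields[1:]:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed q as "not acceptable"
        entries.append((media_type, q))
    # sorted() is stable, so entries with equal q keep their header order.
    return sorted(entries, key=lambda e: e[1], reverse=True)


def choose_representation(accept_header: str) -> str:
    """Return 'text/markdown' only when the client explicitly prefers it."""
    for media_type, q in parse_accept(accept_header):
        if q <= 0:
            continue
        if media_type == "text/markdown":
            return "text/markdown"
        if media_type in ("text/html", "application/xhtml+xml"):
            return "text/html"
    # Wildcards, unknown types, and empty headers fall back to HTML.
    return "text/html"
```

A server that varies its response this way should also send `Vary: Accept`, so that shared caches do not hand the Markdown body to a browser or the HTML body to an agent.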

Cloudflare explains that it pointed an agent (Kimi-k2.5 via OpenCode) at its own documentation site (developers.cloudflare.com) and at several other technical sites. The result: 31% fewer tokens consumed and correct answers reached 66% faster than on the other, unoptimized sites. These figures should be treated with caution, since they come from internal tests that have not been independently verified. However, the order of magnitude is consistent with what is known about the structural overhead of HTML.
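Since those numbers cannot be verified from the outside, a rough check on one's own pages is easy to sketch. The Python below compares the size of a raw HTML page with the size of its visible text, using only the standard library. The names `VisibleText` and `size_reduction` are hypothetical, character count is used as a crude stand-in for token count, and skipping only script, style, and nav elements is a simplifying assumption, so the output indicates an order of magnitude at best.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside <script>, <style>, and <nav> elements."""

    SKIPPED = {"script", "style", "nav"}

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0  # number of skipped elements currently open
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.chunks.append(data.strip())


def size_reduction(html: str) -> float:
    """Fraction of characters saved by keeping only the visible text."""
    if not html:
        return 0.0
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = "\n".join(parser.chunks)
    return 1 - len(text) / len(html)
```

Running `size_reduction` over a handful of real documentation pages gives a quick sense of how much of each response is markup rather than content, which is the overhead a Markdown representation avoids.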

Technical Implementation in Cloudflare Docs: Practical and Repeatable

The most instructive part of the article is how Cloudflare restructured its own documentation. The approach is interesting because it works around a real problem: of the seven tools tested in February 2026, only three (Claude Code, OpenCode, and Cursor) automatically send the Accept: text/markdown header. The others need an alternative.

The chosen solution combines two Cloudflare rules:

- A URL rewrite transforms the request /r2/get-started/index.md into /r2/get-started/.

- A second rule automatically adds the Accept: text/markdown header to these rewritten requests.

Result: any agent can access the Markdown version of any page by appending /index.md to the URL, without having to set a special header.
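The combined effect of the two rules can be modeled in a few lines of Python. This is not Cloudflare Rules syntax, only a sketch of the behavior described above; the function name `rewrite_markdown_request` is hypothetical, and replacing any Accept header the client already sent is an assumption.

```python
def rewrite_markdown_request(
    path: str, headers: dict[str, str]
) -> tuple[str, dict[str, str]]:
    """Map '<page>/index.md' to '<page>/' and request Markdown for it."""
    suffix = "/index.md"
    if not path.endswith(suffix):
        # Not a Markdown-suffixed URL: pass the request through untouched.
        return path, dict(headers)

    # Rule 1: strip the suffix so the origin sees the canonical page URL.
    new_path = path[: -len(suffix)] + "/"

    # Rule 2: force content negotiation toward Markdown, replacing any
    # Accept header the client sent (header names are case-insensitive).
    new_headers = {k: v for k, v in headers.items() if k.lower() != "accept"}
    new_headers["Accept"] = "text/markdown"
    return new_path, new_headers
```

For example, `rewrite_markdown_request("/r2/get-started/index.md", {})` yields the path `/r2/get-started/` together with an `Accept: text/markdown` header, while a request for `/r2/get-started/` passes through unchanged.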

Another noteworthy decision: instead of a single massive llms.txt file (Cloudflare's documentation contains over 5,000 pages), each first-level directory has its own file, and the root file points to these per-directory files. This prevents the grep loop described in the article: an agent faced with a very long file loses the thread, falls back on keyword searches, multiplies its calls, and returns lower-quality answers.

Granularity was also carefully considered: about 450 pages consisting solely of link lists (directory pages) were excluded from llms.txt, since their subpages are already listed individually and the link lists themselves add no semantic value for an LLM.
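The layout described above (one file per first-level directory, a root file that points to them, link-only pages left out) can be sketched as a small generator. This is an illustration, not Cloudflare's build script: the `Page` type, the `link_only` flag, and the function name `build_llms_files` are hypothetical, and the output uses only the basic llms.txt conventions of a title heading followed by Markdown link lists.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Page:
    path: str                # e.g. "/r2/get-started/"
    title: str
    link_only: bool = False  # True for directory pages that only list links


def build_llms_files(pages: list[Page], site_name: str) -> dict[str, str]:
    """Return a mapping of file path to llms.txt content."""
    sections: dict[str, list[Page]] = defaultdict(list)
    for page in pages:
        if page.link_only:
            continue  # directory pages add nothing beyond their subpages
        first = page.path.strip("/").split("/")[0]
        if not first:
            continue  # the site root has no first-level directory
        sections[first].append(page)

    files: dict[str, str] = {}
    root_lines = [f"# {site_name}", "", "## Sections", ""]
    for name in sorted(sections):
        # The root file only points at the per-directory files.
        root_lines.append(f"- [{name}](/{name}/llms.txt)")
        lines = [f"# {site_name}: {name}", "", "## Pages", ""]
        lines += [f"- [{p.title}]({p.path})" for p in sections[name]]
        files[f"/{name}/llms.txt"] = "\n".join(lines) + "\n"
    files["/llms.txt"] = "\n".join(root_lines) + "\n"
    return files
```

Keeping the root file short means an agent can read it in full, pick one section, and fetch only that section's file instead of searching through thousands of entries.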

A Scorekeeper That Also Sells the Fixes

Cloudflare's position deserves scrutiny. The company publishes a reference score for agent readiness, integrates that score into its URL Scanner, provides ready-made templates to fix each failing check... and sells the products (Workers, Rules, Access) used to implement those fixes. isitagentready.com itself is run by Cloudflare and exposes an MCP server.

This is not necessarily a problem: Google did the same with Lighthouse and Core Web Vitals, acting as both the judge of performance and a vendor of tools to improve it (through Google Cloud, Firebase, etc.). However, it means the scoring criteria could evolve according to the company's commercial interests as much as the real needs of agents. A standard backed by a single company, even a well-intentioned one, can tilt the direction the whole ecosystem takes.

It is also worth noting that Cloudflare actively promotes standards for agent payments (x402, Universal Commerce Protocol), some of which involve direct partners such as Coinbase. These standards are not yet included in the score, but their presence in the tool already signals a direction.

Things Developers Can Actually Do Today

Despite these reservations, several actions pay off clearly and immediately, regardless of how the standards evolve:

- Serving Markdown on demand is technically simple, reduces costs for API consumers, and improves response quality for agents. This is the priority.

- Editing robots.txt for AI agents (for example, by adding directives for crawlers like GPTBot, ClaudeBot, CCBot) is good hygiene, costs nothing, and clarifies access rights.

- Structuring llms.txt by section on content-heavy sites is good documentation practice, both for agents and for people who want to quickly understand a site's architecture.

On the other hand, implementing MCP Server Cards or API Catalogs for a site that does not yet have a public API or a clear agent use case amounts to building a waiting room that no one visits.
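For the robots.txt item in the list above, it helps to verify that the directives say what was intended before deploying them. The sketch below uses Python's standard `urllib.robotparser` to evaluate a sample file against the crawlers named in this article. The rules, the example.com URLs, and the helper name `allowed` are illustrative assumptions, not recommendations, and robots.txt only expresses a preference that each crawler chooses whether to honor.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: each AI crawler gets its own group, and all
# other user agents fall under the wildcard group.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: ClaudeBot
Allow: /

User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Report whether robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("GPTBot", "ClaudeBot", "CCBot", "SomeOtherBot"):
        for path in ("/docs/", "/private/report", "/admin/"):
            url = f"https://example.com{path}"
            print(f"{agent:13} {path:16} {allowed(agent, url)}")
```

Note that a crawler with its own group ignores the wildcard group entirely, so in this sample GPTBot is not blocked from /admin/ unless that rule is repeated in its group.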

Adoption as a Market Indicator, Not a Necessity

The true value of Cloudflare's initiative may lie in the Radar dataset, which tracks weekly adoption of each standard across the 200,000 most visited sites, segmented by domain category. Such data will make it possible to measure whether the standards truly deliver gains, or whether most sites remain passive and expect agents to adapt to them, just as agents have adapted to HTML for thirty years.

The answer to this question will say a lot about the balance of power between site publishers and agent developers. As the most popular agents gain sufficiently robust HTML parsing capabilities, the pressure on sites to adapt will decrease. Conversely, if avoiding the cost and latency of unoptimized HTML becomes a measurable competitive advantage for compliant sites, adoption will naturally increase.
