In a single day, the Paris-based startup made three major announcements confirming its goal of becoming an indispensable building block of global AI infrastructure. Here is what they mean in practice.

Key Takeaways:

- Mistral Small 4 combines reasoning, multimodal, and coding capabilities in a single model for the first time, under the Apache 2.0 license.

- Mistral joins NVIDIA's Nemotron coalition as a founding member alongside Perplexity, Cursor, and Black Forest Labs.

- Leanstral is the first open-source agent capable of generating formal proofs for Lean 4, and Mistral claims a cost/performance ratio 15 times better than that of general-purpose competitors.

- These three announcements indicate that Mistral is repositioning itself not just as a national alternative but as a global reference player.

Small 4: One model instead of three

Until now, technical teams wanting to use Mistral models had to choose Magistral for reasoning, Pixtral for image processing, and Devstral for code tooling. Mistral Small 4 puts an end to this fragmentation.

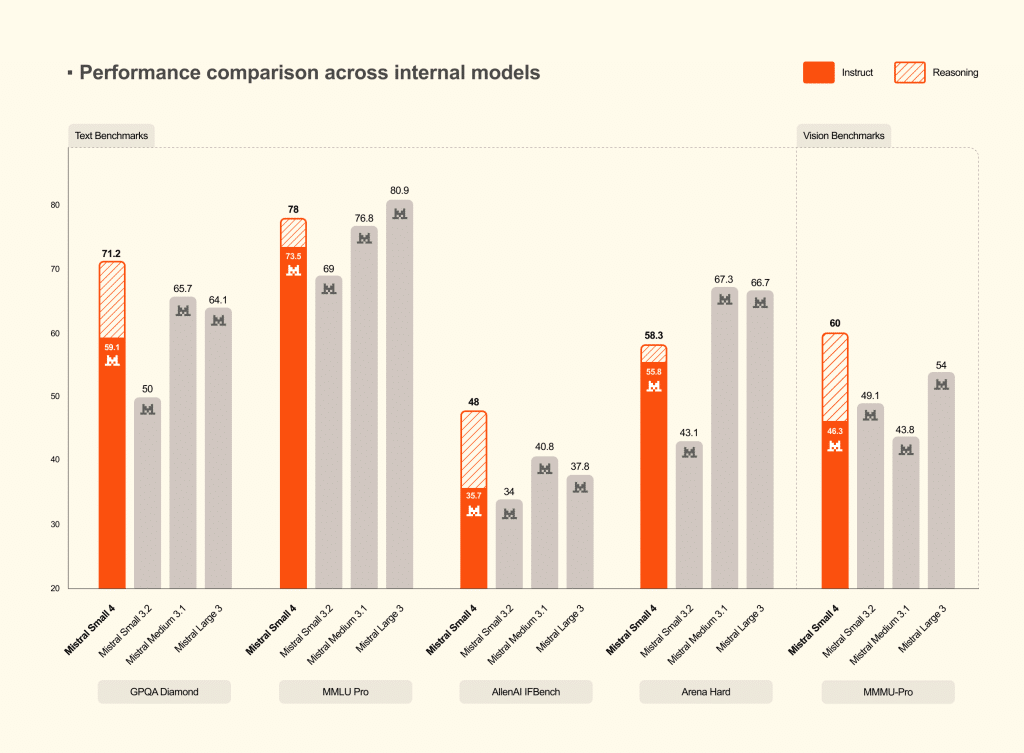

The model is based on a Mixture of Experts (MoE) architecture with 119 billion parameters in total, of which only 6 billion are active for each token. This design keeps per-inference compute low while preserving the capacity of the full model. Compared to Small 3, Mistral reports 40% lower latency and three times more queries per second in an optimized configuration.
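To make "only 6 billion active" concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a toy illustration of the general MoE technique, not Mistral's implementation: the number of experts (8), the number active per token (2), the linear router, and the scaling "experts" are all hypothetical. The point is that a router scores every expert, but only the top-k experts actually run, which is why per-token compute tracks the active parameters rather than the total.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(x, gate, experts, k=2):
    """Route token vector x to its top-k experts and mix their outputs.

    gate: one weight row per expert (router score = dot product with x).
    experts: callables mapping a vector to a vector of the same size.
    Returns the mixed output and the indices of the experts that ran.
    """
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate]
    # Keep only the k highest-scoring experts; the others never execute.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    mix = softmax([scores[i] for i in top])
    out = [0.0] * len(x)
    for weight, i in zip(mix, top):
        y = experts[i](x)
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, top

def make_expert(scale):
    """Hypothetical toy expert: multiplies its input by a constant."""
    return lambda v: [scale * vi for vi in v]

# Hypothetical toy setup: 8 experts, 2 active per token.
experts = [make_expert(s) for s in range(1, 9)]
gate = [[float(i), 0.0] for i in range(8)]  # expert i scores i * x[0]
out, active = moe_layer([1.0, 2.0], gate, experts, k=2)
print(active)  # [7, 6]
print(f"Active share in Small 4: {6 / 119:.1%}")  # 5.0%
```

With Mistral's reported figures, roughly 5% of the weights participate in each token's forward pass; the remaining capacity is still available to other tokens that the router sends elsewhere.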

The context window reaches 256,000 tokens, so long documents can be processed without truncation. The model accepts both text and images as input.

The most notable addition for professional use is the reasoning_effort parameter. It lets the user choose between a fast, lightweight response equivalent to Small 3's behavior and a deeper, step-by-step analysis. A single model thus covers needs that previously required three.
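As a rough sketch of how this switch might look in a request, the snippet below builds a JSON body for Mistral's chat-completions endpoint without sending it. Only the parameter name reasoning_effort comes from the announcement; the values "none" and "high" and the model alias "mistral-small-latest" are assumptions to check against Mistral's API reference before use.

```python
import json

# Mistral's chat-completions endpoint; the body below would be POSTed here.
API_URL = "https://api.mistral.ai/v1/chat/completions"

def build_request(prompt, deep=False, model="mistral-small-latest"):
    """Build a chat-completions body that toggles reasoning depth.

    Assumptions: the model alias and the effort values are illustrative.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # Assumed values: "none" for fast, Small 3-style answers,
        # "high" for step-by-step reasoning.
        "reasoning_effort": "high" if deep else "none",
    }
    return json.dumps(body)

print(build_request("Summarize this contract in three bullet points."))
```

The design point is that the same deployment serves both modes: the caller flips one field per request instead of routing to a different model.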

In benchmarks, Mistral reports that with reasoning enabled, Small 4 matches or exceeds GPT-OSS 120B while producing significantly shorter outputs. Shorter outputs mean lower latency, lower inference costs, and a better user experience in production.

The model is available through the Mistral API, as an optimized NVIDIA NIM container, and on Hugging Face for on-premises deployment. It is published under the Apache 2.0 license and is compatible with popular inference frameworks: vLLM, llama.cpp, SGLang, and Transformers.

Nemotron coalition: Mistral at the big table

This is probably the most strategic announcement of the day. Mistral joins NVIDIA's Nemotron coalition as a founding member alongside Cursor, Perplexity, Black Forest Labs, LangChain, and Mira Murati's lab.

The coalition aims to co-develop an open base model, Nemotron 4, which will be trained on NVIDIA's DGX Cloud and then released as open source for the entire industry to customize. Each member contributes complementary expertise: multilingual capabilities and model architecture from Mistral, search from Perplexity, orchestration from LangChain, and multimodality from Black Forest Labs.

For Mistral, this means access to NVIDIA's computing infrastructure, allowing it to train models at a scale it could not finance on its own. In return, the startup offers training techniques, multimodal capabilities, and fine-tuning tools for businesses.

The industrial logic is clear. Against the closed ecosystems of OpenAI and Google, this coalition positions itself as a coordinated force for the open-source camp. For NVIDIA, a reference model optimized for its chips directly drives demand for its hardware.

This alliance is not without tension. The DGX Cloud on which Nemotron 4 will be trained belongs to NVIDIA. Mistral is aware of this: the company is investing in its own computing capacity in France and Sweden so that the partnership remains a strategic choice rather than a forced dependency. Technological sovereignty remains an open question, since Mistral is tightening its ties with a company subject to U.S. extraterritorial regulations.

Leanstral: Proving that AI-generated code is correct

The third announcement is the most technical. It addresses a fundamental issue for AI agents, even though its audience is limited today.

The problem Leanstral is trying to solve

When an AI agent generates code today, the result is probabilistic. The code looks correct and passes tests, but there is no absolute guarantee. Verification falls to humans, who read, test, and fix it. As agents produce code faster, this human bottleneck becomes critical.

There is a solution to this problem: formal proof. A proof assistant like Lean 4 lets a developer write not only a program but also a mathematical proof of its properties. If the proof is valid, the code is guaranteed to meet its specification: not "probably correct," not "correct in the tested cases," but mathematically correct with respect to that specification. Lean 4 is used by mathematicians to formalize complex proofs and by engineers to certify critical software.
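As a minimal illustration, here is a tiny program and a partial specification stated as theorems, written in plain Lean 4 without Mathlib. The function and theorem names are our own and unrelated to Leanstral. If either proof were wrong, Lean would refuse to accept the file; no test suite is involved.

```lean
-- The program: return the larger of two natural numbers.
def myMax (a b : Nat) : Nat := if a ≤ b then b else a

-- The specification, stated as theorems: the result is at least as
-- large as each input. Lean's kernel checks every step of the proofs.
theorem le_myMax_left (a b : Nat) : a ≤ myMax a b := by
  unfold myMax
  split <;> omega  -- one case per branch of the `if`

theorem le_myMax_right (a b : Nat) : b ≤ myMax a b := by
  unfold myMax
  split <;> omega
```

These theorems hold for all natural numbers, not just sampled inputs. They are also only as strong as the statement: a complete specification would additionally require the result to equal one of the two inputs.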

The issue is that writing proofs in Lean 4 is a specialized, slow, and laborious task. This is the gap that Leanstral is trying to fill.

What Leanstral concretely does

Leanstral, an AI agent with 6 billion active parameters, is specifically trained to produce formal proofs. It does not just generate code: it also produces the proof that certifies that code. Lean 4 then checks the proof and rejects it if it is invalid. The model cannot talk its way past the verifier.

This is the first open-source agent of its kind for Lean 4. Existing systems either build wrappers around general-purpose models or are limited to isolated mathematical problems. Leanstral is designed to work in real formal repositories, with a sparse architecture and optimizations specific to proof tasks.
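The generate-then-check pattern described above can be sketched in a few lines of Python. This is not Leanstral's implementation: `generate` and `verify` are hypothetical hooks standing in for the model and for the Lean 4 checker, and the toy verifier below merely rejects placeholder proofs containing `sorry`. The `attempts` budget plays the role of k in a pass@k score: the task counts as solved if any of the k candidates is accepted.

```python
from typing import Callable, Optional

def prove_with_retries(
    goal: str,
    generate: Callable[[str, int], str],
    verify: Callable[[str], bool],
    attempts: int = 2,
) -> Optional[str]:
    """Return the first candidate proof the verifier accepts, or None.

    generate(goal, attempt) stands in for the model; verify(proof) stands
    in for the Lean 4 checker. Both are hypothetical hooks.
    """
    for attempt in range(attempts):
        candidate = generate(goal, attempt)
        if verify(candidate):
            return candidate  # accepted by the checker: safe to keep
    return None  # every candidate rejected; nothing unverified is returned

# Toy usage: the first candidate is a placeholder, the second is accepted.
candidates = ["by sorry", "by omega"]
proof = prove_with_retries(
    "a ≤ myMax a b",
    generate=lambda goal, i: candidates[i],
    verify=lambda p: "sorry" not in p,  # toy check: reject placeholders
    attempts=2,
)
print(proof)  # by omega
```

Because acceptance is decided only by `verify`, the generator can be as unreliable as it likes: a bad candidate costs an attempt, never correctness.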

Benchmarks and limitations

On FLTEval, Mistral's own evaluation suite, Leanstral scores 26.3 points for $36 (pass@2), while Claude Sonnet scores 23.7 points for $549. At pass@16, Leanstral reaches 31.9 points, 8 points ahead of Sonnet. Claude Opus 4.6 leads in absolute quality with 39.6 points, but at $1,650, which is 92 times more expensive.
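The "15 times" figure in the key takeaways can be reproduced from the pass@2 numbers above. The short script below uses only the scores and costs Mistral reported; "points per $100" is our own crude normalization for illustration and ignores that scores are bounded while costs are not.

```python
# Figures reported by Mistral on FLTEval at pass@2: (score, cost in USD).
results = {
    "Leanstral": (26.3, 36),
    "Claude Sonnet": (23.7, 549),
}

for name, (score, cost) in results.items():
    # Crude normalization: benchmark points obtained per $100 spent.
    print(f"{name}: {score / cost * 100:.2f} points per $100")

ratio = results["Claude Sonnet"][1] / results["Leanstral"][1]
print(f"Cost ratio: {ratio:.2f}x")  # 15.25x
```

At a slightly higher score, Leanstral costs about 15 times less than Sonnet, which is where the headline ratio comes from.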

These figures should be read with caution. FLTEval is an internal benchmark that third parties cannot reproduce. Comparing the cost of a specialized model with 6 billion active parameters to large general-purpose models is not neutral: it pits a tool optimized for one task against a Swiss army knife. Leanstral's ability to generalize beyond the FLT project (the Lean formalization of Fermat's Last Theorem) on which it was evaluated has yet to be proven. Finally, all tests were run in the Mistral Vibe environment.

Why it matters beyond mathematics

The real issue is trust in autonomous agents. Today, AI agents write code and process data without every line being verified. An agent that can formally prove that code does exactly what it is supposed to do changes the picture: humans specify the expected behavior, and the machine proves that the code delivers it. Auditing shifts from line-by-line review to writing specifications.

Leanstral is available now through Mistral Vibe (command /leanstall), via a free API (labs-leanstral-2603), and as downloadable weights under the Apache 2.0 license.

A definitive strategic repositioning

Read together, these three announcements are not just isolated product launches. They define a roadmap.

- Small 4 covers the business market with a versatile, high-performance, and cost-effective model.

- The Nemotron coalition gives Mistral a stake in setting global standards for open-source AI.

- Leanstral demonstrates a depth of R&D beyond chat models.

Mistral is targeting one billion euros in revenue this year, is building a data center in Sweden, signed a contract with the Ministry of Defense in January, and acquired Koyeb in February. It is achieving all this while maintaining a consistent open-source policy under the Apache 2.0 license. The remaining question is sustainability: How long can a hyper-growth company continue to release its models for free while financing the massive infrastructure needed to train them?
